Orthogonality and Symmetric Matrices and the SVD


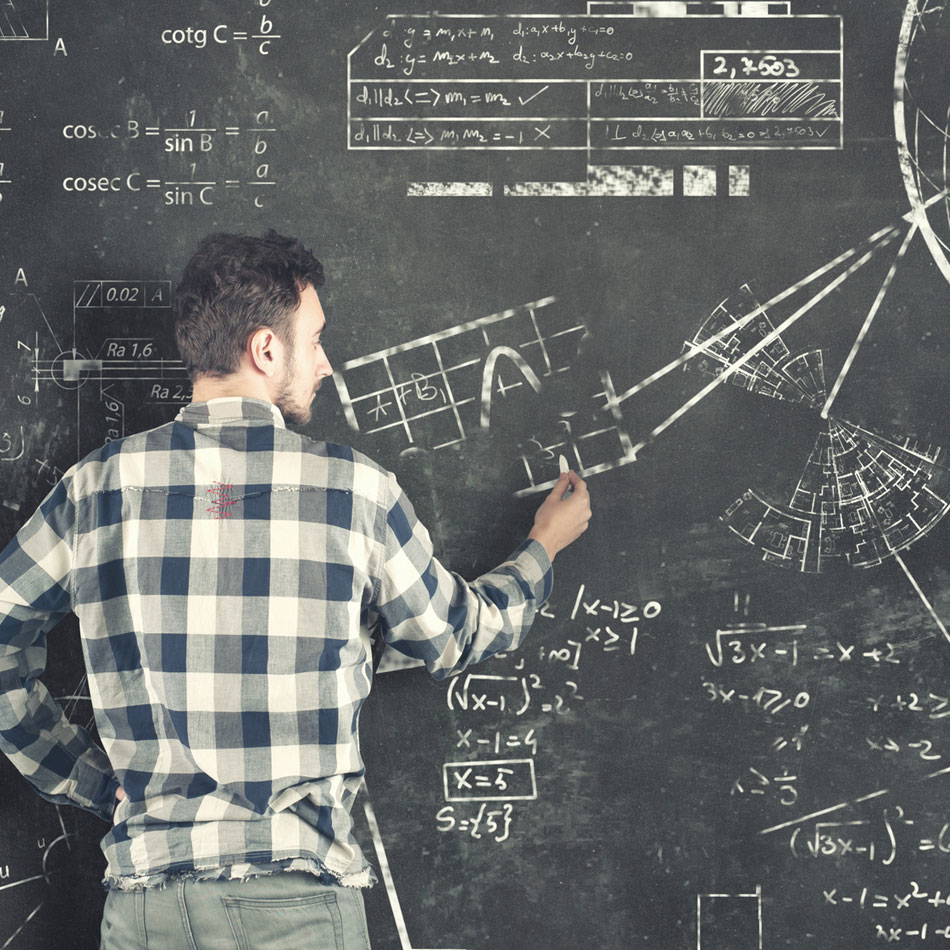

In the first part of this course you will explore methods to compute an approximate solution to an inconsistent system of equations, that is, a system that has no exact solution. Our overall approach is to center our algorithms on the concept of distance. To this end, you will first tackle the ideas of distance and orthogonality in a vector space. You will then apply orthogonality to identify the point within a subspace that is nearest to a point outside of it. This idea plays a central role in understanding solutions to inconsistent systems. By taking the subspace to be the column space of a matrix, you will develop a method for producing approximate (“least-squares”) solutions for inconsistent systems.
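As a rough illustration of the approach described above, the sketch below finds a least-squares solution of a small inconsistent system by solving the normal equations AᵀA x = Aᵀb. The matrix `A`, the vector `b`, and the helper name `least_squares_2col` are illustrative assumptions rather than course materials, and the helper handles only matrices with two columns.

```python
from fractions import Fraction


def least_squares_2col(A, b):
    """Solve the normal equations A^T A x = A^T b for a matrix A with two columns.

    Illustrative helper (hypothetical name); uses exact rational arithmetic.
    """
    a1 = [Fraction(row[0]) for row in A]
    a2 = [Fraction(row[1]) for row in A]

    def dot(u, v):
        return sum(x * y for x, y in zip(u, v))

    # Entries of the 2x2 matrix A^T A and of the vector A^T b.
    g11, g12, g22 = dot(a1, a1), dot(a1, a2), dot(a2, a2)
    r1, r2 = dot(a1, b), dot(a2, b)

    det = g11 * g22 - g12 * g12
    if det == 0:
        raise ValueError("columns of A are linearly dependent")

    # Cramer's rule for the 2x2 normal equations.
    x1 = (g22 * r1 - g12 * r2) / det
    x2 = (g11 * r2 - g12 * r1) / det
    return x1, x2


# An inconsistent system: no x satisfies all three equations exactly.
A = [[1, 0], [1, 1], [1, 2]]
b = [6, 0, 0]

x_hat = least_squares_2col(A, b)  # equals (5, -3)

# A x_hat is the point of the column space of A nearest to b.
projection = [row[0] * x_hat[0] + row[1] * x_hat[1] for row in A]
residual = [bi - pi for bi, pi in zip(b, projection)]  # equals [1, -2, 1]
```

The residual `b - A x_hat` is orthogonal to both columns of `A`, which is the defining property of the orthogonal projection onto the column space.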

You will then explore another application of orthogonal projections: creating a matrix factorization widely used in practical applications of linear algebra. The remaining sections examine some of the many least-squares problems that arise in applications, including the least squares procedure with more general polynomials and functions.

This course then turns to symmetric matrices, which arise more often in applications, in one way or another, than any other major class of matrices. You will construct the diagonalization of a symmetric matrix, which gives a basis for the remainder of the course.
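To make the idea of diagonalizing a symmetric matrix concrete, here is a minimal sketch for the 2x2 case. It computes eigenvalues and orthonormal eigenvectors from the closed-form solution of the characteristic equation, then rebuilds the matrix as a sum of terms λ u uᵀ. The function names `spectral_decomposition_2x2` and `rebuild` and the example matrix are assumptions made for illustration only.

```python
import math


def spectral_decomposition_2x2(a, b, d):
    """Return [(eigenvalue, unit eigenvector), ...] for the symmetric matrix [[a, b], [b, d]].

    Illustrative helper (hypothetical name) for the 2x2 case only.
    """
    if b == 0:
        # Already diagonal: the standard basis vectors are eigenvectors.
        return [(a, (1.0, 0.0)), (d, (0.0, 1.0))]

    mean = (a + d) / 2
    radius = math.hypot((a - d) / 2, b)

    pairs = []
    for lam in (mean + radius, mean - radius):
        # (A - lam I) v = 0 gives (a - lam) * vx + b * vy = 0, so v = (b, lam - a) works.
        vx, vy = b, lam - a
        norm = math.hypot(vx, vy)
        pairs.append((lam, (vx / norm, vy / norm)))
    return pairs


def rebuild(pairs):
    """Sum lam * u u^T over the eigenpairs to recover the original matrix."""
    M = [[0.0, 0.0], [0.0, 0.0]]
    for lam, u in pairs:
        for i in range(2):
            for j in range(2):
                M[i][j] += lam * u[i] * u[j]
    return M


pairs = spectral_decomposition_2x2(2, 1, 2)  # the matrix [[2, 1], [1, 2]]
M = rebuild(pairs)  # approximately [[2, 1], [1, 2]]
```

Because the matrix is symmetric, eigenvectors belonging to distinct eigenvalues are orthogonal, so the unit eigenvectors form an orthonormal basis.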

INNER PRODUCT, LENGTH, AND ORTHOGONALITY

ORTHOGONAL SETS

ORTHOGONAL PROJECTIONS

THE GRAM-SCHMIDT PROCESS

LEAST-SQUARES PROBLEMS

LEAST-SQUARES AND LINEAR MODELS

DIAGONALIZATION OF SYMMETRIC MATRICES

QUADRATIC FORMS

CONSTRAINED OPTIMIZATION

THE SINGULAR VALUE DECOMPOSITION

Recommended

- High school algebra, geometry, and pre-calculus

Required

- Linear Equations (DL 0050M)

- Matrix Algebra (DL 0051M)

- Determinants and Eigenvalues (DL 0049M)

Required

- Internet connection (DSL, LAN, or cable connection desirable)

- Adobe Acrobat PDF reader (to download for free, visit get.adobe.com/reader/)


Who Should Attend

This course is designed for undergraduate students and advanced high school students who are interested in pursuing any career path or degree program that involves linear algebra, and for industry employees who are seeking a better understanding of linear algebra for their career development.

What You Will Learn

- Orthogonal projections and distances to express a vector as a linear combination of orthogonal vectors

- How to construct vector approximations using projections

- How to characterize bases for subspaces, and construct orthonormal bases

- The iterative Gram-Schmidt process

- The QR decomposition

- Orthogonal basis construction

- How to compute general solutions and least-squares errors for least-squares problems using the normal equations and the QR decomposition

- How to apply least-squares and multiple regression to construct a linear model from a set of data points

- A spectral decomposition of a matrix

- Quadratic forms using eigenvalues and eigenvectors

- The SVD for a rectangular matrix
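For readers who want a preview of the Gram-Schmidt process named in the list above, the following is a minimal sketch under stated assumptions. It uses the modified form, which subtracts each projection from the running remainder, and skips vectors that are numerically dependent on earlier ones. The function name `gram_schmidt`, the tolerance `tol`, and the sample vectors are illustrative choices, not part of the course.

```python
import math


def gram_schmidt(vectors, tol=1e-12):
    """Return an orthonormal list of vectors spanning the same subspace as `vectors`.

    Illustrative helper (hypothetical name); modified Gram-Schmidt variant.
    """
    basis = []
    for x in vectors:
        v = [float(c) for c in x]
        for q in basis:
            # Remove the component of v along the unit vector q.
            coeff = sum(vi * qi for vi, qi in zip(v, q))
            v = [vi - coeff * qi for vi, qi in zip(v, q)]
        norm = math.sqrt(sum(c * c for c in v))
        if norm > tol:  # skip vectors that add nothing new to the span
            basis.append([c / norm for c in v])
    return basis


# Two linearly independent vectors in R^3 (sample data for illustration).
Q = gram_schmidt([(3, 6, 0), (1, 2, 2)])
```

The vectors in `Q` can serve as the columns of the orthogonal factor in a QR decomposition of the matrix whose columns are the input vectors.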

How You Will Benefit

- Apply theorems related to orthogonality and least-squares to construct mathematical models for real-world data.

- Apply the Singular Value Decomposition (SVD) to characterize the structure of a matrix and its invertibility.

- Apply theorems related to orthogonal complements, and their relationships to Row and Null space, to characterize vectors and linear systems.

- Apply eigenvalues and eigenvectors to solve optimization problems that are subject to distance and orthogonality constraints.

- Analyze mathematical statements and expressions involving linear systems and matrices, for example to describe how well a mathematical model fits measured data.

- Taught by Experts in the Field

- Grow Your Professional Network

The course schedule was well-structured with a mix of lectures, class discussions, and hands-on exercises led by knowledgeable and engaging instructors.


The Georgia Tech Global Learning Center and Georgia Tech-Savannah campus are compliant with the Americans with Disabilities Act. Any individual who requires accommodation for participation in any course offered by GTPE should contact us prior to the start of the course.

Courses that are part of certificate programs include a required assessment. Passing criteria are determined by the instructor and are provided to learners at the start of the course.

CEUs are awarded to participants who attend a minimum of 80% of the scheduled class time.

Georgia Tech’s Office of Research Security and Compliance requires citizenship information be maintained for those participating in most GTPE courses. Citizenship information is obtained directly from the learner at the time of registration and is maintained in the Georgia Tech Student System.

Learners enrolled in any of Georgia Tech Professional Education's programs are considered members of the Georgia Tech community and are expected to comply with all policies and procedures put forth by the Institute, including the Student Code of Conduct and Academic Honor Code.

Please refer to our Terms and Conditions for complete details on the policies for course changes and cancellations.

Participants in GTPE courses are required to complete an online profile that meets the requirements of Georgia Tech Research Security. Information collected is maintained in the Georgia Tech Student System. The following data elements are considered directory information and are collected from each participant as part of the registration and profile setup process:

- Full legal name

- Email address

- Shipping address

- Company name

This data is not published in Georgia Tech’s online directory system and therefore is not currently available to the general public. Learner information is used only as described in our Privacy Policy. GTPE data is not sold or provided to external entities.

Sensitive Data

The following data elements, if in the Georgia Tech Student System, are considered sensitive information and are only available to Georgia Tech employees with a business need-to-know:

- Georgia Tech ID

- Date of birth

- Citizenship

- Gender

- Ethnicity

- Religious preferences

- Social security numbers

- Registration information

- Class schedules

- Attendance records

- Academic history

At any time, you can remove your consent to marketing emails as well as request to delete your personal data. Visit our GTPE EU GDPR page for more information.

Classes and events being held at the Georgia Tech Global Learning Center in Atlanta or Georgia Tech-Savannah campus may be impacted by closures or delays due to inclement weather.

The Georgia Tech Global Learning Center will follow the guidelines of Georgia Tech main campus in Atlanta. Students, guests, and instructors should check the Georgia Tech homepage for information regarding university closings or delayed openings due to inclement weather. Please be advised that if campus is closed for any reason, all classroom courses are also canceled.

Students, guests, and instructors attending classes and events at Georgia Tech-Savannah should check the Georgia Tech-Savannah homepage for information regarding closings or delayed openings due to inclement weather.

GTPE certificates of program completion consist of a prescribed number of required and elective courses offered and completed at Georgia Tech within a consecutive six-year period. Exceptions, such as requests for substitutions or credit for prior education, can be requested through the petition form. Exceptions cannot be guaranteed.

Please refer to our Terms and Conditions for complete details on the policy for refunds.

Georgia Tech is a tobacco-free and smoke-free campus. The use of cigarettes, cigars, pipes, all forms of smokeless tobacco, and any other smoking devices that use tobacco is strictly prohibited. There are no designated smoking areas on campus.

Courses that are eligible for special discounts will be noted accordingly on the course page. Only one coupon code can be entered during the checkout process, and it cannot be redeemed after checkout is complete. If you have already registered and forgot to use your coupon code, you can request an eligible refund. GTPE will cancel any transaction where a coupon was misused or ineligible. If you are unsure if you can use your coupon code, please check with the course administrator.

GTPE does not have a program for senior citizens. However, Georgia Tech offers a 62 or Older Program for Georgia residents who are 62 or older and are interested in taking for-credit courses. This program does not pay for noncredit professional education courses. Visit the Georgia Tech Undergraduate Admissions page for more information on the undergraduate program and the Georgia Tech Graduate Admissions page for more information on the graduate program.

Designed for an online audience, MOOCs are available to anyone with an internet connection and are free to enroll. Some MOOCs can be started any time – others at regular intervals – and range in length from a few weeks to a few months to complete. You’ll have access to a wide range of online media and interactive tools, including video lectures, class exercises, discussions, and assessments.

Anyone with an internet connection can enroll. Some courses may be unavailable in a small number of countries because of trade restrictions or government policies.

Most courses are free, though there is a small fee if you opt to work towards a certificate of completion. Some courses count toward university credit—and some, like our online master’s program in computer science, offer a full degree. These credit-bearing courses do have fees and applications associated with them.

Yes, Georgia Tech offers CEUs for some completed MOOC courses taken through Coursera and edX. You have the option of purchasing CEUs after earning a verified course certificate.

A digital badge is an acknowledgement that you've successfully completed a MOOC course. You can display your digital badge on your online profiles so that colleagues and employers can see your achievements at a glance.

You can earn CEUs, digital badges, and verified certificates of completion. You can also use MOOCs as an alternate pathway to enter Georgia Tech master's programs through The Analytics: Essential Tools and Methods MicroMasters and the Online Master's in Computer Science.

Certificates of completion are issued by the online provider, Coursera or edX. Although they are a great way to showcase your skills, they are not the same as official academic credit from Georgia Tech. However, if you purchase CEUs (which are denoted by a badge), then you can request an official GTPE transcript for free.

TRAIN AT YOUR LOCATION

We enable employers to provide specialized, on-location training on their own timetables. Our world-renowned experts can create unique content that meets your employees' specific needs. We also have the ability to deliver courses via web conferencing or on-demand online videos. For 15 or more students, it is more cost-effective for us to come to you.

- Save Money

- Flexible Schedule

- Group Training

- Customize Content

- On-Site Training

- Earn a Certificate